[tl;dr sec] #319 - AI is Eating Security, BSidesSF & RSA, Claude Finds Firefox 0-days

What does security look like in 5 years? Let's hang out in San Francisco and avoid badge scans, Opus 4.6 finds 22 vulns and auto-writes 2 exploits

Hey there,

I hope you’ve been doing well!

🌉 BSidesSF and RSA

As the gentle blooming of flowers announces spring, and piles of orange leaves welcome fall, so too does the torrential downpour of security vendor emails and LinkedIn DMs “just touching base” herald the arrival of… RSA!

It’d be great to cross paths if you’re in town. Here’s what I’m up to:

Pre-BSidesSF - Friday March 20th - tl;dr sec Community Kickoff. tl;dr sec’s first community event 😍 I’m excited about it, there’s going to be cool people, I’ve artisanally curated local SF food options, and we’ll have a fireside chat about AI and security builders.

We’ll also have tl;dr sec t-shirts and a totally new, never before seen sticker…

If you can’t make it, you can try to get a t-shirt or stickers by finding a) me or b) Semgrep at another event.

BSidesSF - I’m joining my friends on an Absolute AppSec panel, and will generally be around.

Also check out talks by my colleagues Claudio and Romain on scaling SAST rule writing with AI, Katie Paxton-Fear (InsiderPhD) in a birds of a feather discussion on AI, or Brandon Wu on how to combine AI and static analysis to process CVEs at scale.

RSA - I’m organizing a mini con / lightning talks (Unsupervised + Unhinged) with Daniel Miessler and Decibel. Wed March 25 10am - noon. You can register for their overall program here. More info coming soon!

Also, I’ve made it, ma: my name is on an announcement graphic alongside The Chainsmokers 😂 😂

Sponsor

📣 Securing AI Agents 101

AI agents are changing how work gets done. They take on tasks, orchestrate tools, and drive outcomes across environments.

Securing AI Agents 101 is a one-page resource to help teams build a clear understanding of what AI agents are, how they operate, and where key security considerations show up.

Inside, you’ll find:

What makes an AI agent different from traditional tools

Top risks to watch, from shadow AI to excessive permissions

Four key questions to assess agent usage and exposure

Download the security flashcard and get up to speed quickly.

Things are progressing so fast in this space, great to have a one pager to quickly get up to speed 👍️

AppSec

1Password/load-secrets-action

Load secrets from 1Password into your GitHub Actions jobs using Service Accounts or 1Password Connect.

Please, please, please stop using passkeys for encrypting user data

Tim Cappalli warns companies against using WebAuthn's PRF (Pseudo-Random Function) extension to derive encryption keys for user data, because it couples authentication credentials with data encryption in ways users don't understand. When users delete a passkey from credential managers like Apple Passwords, Google Password Manager, or Bitwarden, they receive no warning that they're permanently destroying access to encrypted photos, message backups, documents, crypto wallets, or other critical data.
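To make the coupling concrete, here is a minimal sketch of why deleting a passkey destroys the data key. It is not real WebAuthn code: the authenticator's PRF is simulated with HMAC-SHA256 over a per-credential secret, and `prf_output`, `derive_data_key`, the salt, and the `info` label are all hypothetical names chosen for illustration.

```python
import hashlib
import hmac
import os


def prf_output(credential_secret: bytes, salt: bytes) -> bytes:
    """Simulated PRF: HMAC-SHA256 keyed by a secret that lives only inside the passkey."""
    return hmac.new(credential_secret, salt, hashlib.sha256).digest()


def derive_data_key(prf_result: bytes, info: bytes = b"backup-encryption-v1") -> bytes:
    """HKDF-SHA256 (extract, then a single expand block) yielding a 32-byte key."""
    prk = hmac.new(b"\x00" * 32, prf_result, hashlib.sha256).digest()
    return hmac.new(prk, info + b"\x01", hashlib.sha256).digest()


app_salt = b"example-app-salt"  # hypothetical per-app salt

# The user registers a passkey; the app derives its data key from the PRF output.
passkey_secret = os.urandom(32)
key_before = derive_data_key(prf_output(passkey_secret, app_salt))

# Same passkey, same salt: the key is reproducible, so nothing needs to be stored.
key_again = derive_data_key(prf_output(passkey_secret, app_salt))

# The user deletes the passkey and registers a new one: a fresh secret, a different key.
new_passkey_secret = os.urandom(32)
key_after = derive_data_key(prf_output(new_passkey_secret, app_salt))

print(key_before == key_again)  # same credential
print(key_before == key_after)  # replaced credential
```

Because the key exists only as a function of the credential's secret, there is nothing left to recover once the credential is gone, which is exactly the failure mode the post warns about.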

Superuser Gateway: Guardrails for Privileged Command Execution

Uber’s Pavi Subenderan and Jyoti Grewal describe Superuser Gateway, a system built to replace direct superuser CLI access with a peer-reviewed, auditable workflow for dangerous operations on production systems (e.g. Google Cloud Storage, OCI, and HDFS). Engineers now submit commands via superuser-cli, which generates a PR in a Git repository where automated CI jobs perform syntax validation, permission checks, and impact estimation (like calculating files affected by rm -r), before a peer approves and a backend service executes the command remotely. This architecture removes superuser credentials from individual engineers' machines entirely, ensuring all privileged operations flow through mandatory peer review while maintaining operational velocity for on-call scenarios.
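As a rough illustration of the impact-estimation step, here is a minimal sketch of a CI check that counts the files an `rm -r` would delete without deleting anything. This is not Uber's implementation: it walks a local directory as a stand-in for GCS/OCI/HDFS, and `estimate_rm_impact` and `is_recursive` are hypothetical names.

```python
import os
import shlex
import tempfile
from pathlib import Path


def is_recursive(flags: list[str]) -> bool:
    """True if any flag requests recursion (-r, -R, -rf, --recursive)."""
    for flag in flags:
        if flag == "--recursive":
            return True
        if not flag.startswith("--") and ("r" in flag or "R" in flag):
            return True
    return False


def estimate_rm_impact(command: str, root: Path) -> int:
    """Dry run: count the files an rm command would delete under root."""
    args = shlex.split(command)
    if not args or args[0] != "rm":
        raise ValueError("this sketch only understands rm commands")
    flags = [a for a in args[1:] if a.startswith("-")]
    targets = [a for a in args[1:] if not a.startswith("-")]
    root = root.resolve()

    total = 0
    for target in targets:
        path = (root / target.lstrip("/")).resolve()
        if root not in (path, *path.parents):
            raise ValueError(f"{target} escapes the allowed root")
        if path.is_file():
            total += 1
        elif path.is_dir():
            if not is_recursive(flags):
                raise ValueError(f"{target} is a directory but -r was not given")
            total += sum(len(files) for _, _, files in os.walk(path))
    return total


# Build a throwaway tree so the example is self-contained.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "logs" / "2024").mkdir(parents=True)
    (root / "logs" / "a.log").write_text("x")
    (root / "logs" / "b.log").write_text("x")
    (root / "logs" / "2024" / "c.log").write_text("x")
    affected = estimate_rm_impact("rm -r logs", root)

print(f"rm -r logs would delete {affected} files")
```

A check like this could post the count on the PR so the reviewer sees the blast radius before approving.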

Sponsor

🚨 Most Confident Organizations Have 2x Higher AI Incident Rates 🚨

Counterintuitive finding from 205 security leaders: organizations most confident in their AI deployments experienced 2x the incident rate of less confident peers. Meanwhile, 43% report AI making infrastructure changes monthly without oversight, and 7% don't even track autonomous changes at all.

Hm I’m curious about (the lack of) tracking autonomous changes. Also “3 in 5 orgs have had or suspect an AI-related incident.” 🤔 Identity is still key.

Cloud Security

Untangling Microsoft Graph's $batch requests in Burp

Requests to Microsoft Graph’s $batch endpoint bundle several API calls into one JSON object, which makes analyzing Azure Portal traffic difficult, since underlying API calls for requests to the $batch endpoint are not individually logged. Katie Knowles has released the graph_batch_parser.py Burp Suite extension to speed up analysis of $batch requests. The extension processes $batch requests into a set of synthetic request/response pairs that can then be reviewed in the “Graph Batch” tab, as well as Burp’s Site Map.
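To show the core idea, here is a minimal sketch that unpacks a JSON `$batch` request/response pair into individual calls, matched on the `id` field that Graph uses to correlate them. It is not the extension's code and does not touch the Burp API; `split_batch` and the sample payloads are made up for illustration.

```python
import json


def split_batch(request_body: str, response_body: str) -> list[dict]:
    """Pair each sub-request in a $batch body with its sub-response by id."""
    requests = json.loads(request_body)["requests"]
    # Responses can come back in any order, so index them by id first.
    responses = {r["id"]: r for r in json.loads(response_body)["responses"]}

    pairs = []
    for req in requests:
        resp = responses.get(req["id"], {})
        pairs.append(
            {
                "id": req["id"],
                "method": req["method"],
                "url": req["url"],
                "status": resp.get("status"),
                "body": resp.get("body"),
            }
        )
    return pairs


# Hypothetical sample traffic, shaped like a Graph JSON batch.
sample_request = json.dumps(
    {
        "requests": [
            {"id": "1", "method": "GET", "url": "/me"},
            {"id": "2", "method": "GET", "url": "/groups?$top=5"},
        ]
    }
)
sample_response = json.dumps(
    {
        "responses": [
            {"id": "2", "status": 403, "body": {"error": {"code": "Forbidden"}}},
            {"id": "1", "status": 200, "body": {"displayName": "Example User"}},
        ]
    }
)

pairs = split_batch(sample_request, sample_response)
for pair in pairs:
    print(pair["method"], pair["url"], "->", pair["status"])
```

Each resulting pair is the kind of synthetic request/response record you would want to review on its own instead of digging through one large JSON blob.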

Stop Enabling Every AWS Security Service

Sena Yakut argues against enabling every AWS security service at once, advocating instead for a risk-based approach that starts with threat modeling your architecture (what are the risks we need to control for?), understanding team behaviors (who has admin privileges? Are there shared accounts or credentials?), and identifying critical breaking points (where small mistakes can cause major damage) before selecting controls. Avoid service overlap with existing third-party tools (like SIEMs) so you’re not overwhelmed by alerts, and evaluate usage-based pricing: depending on your environment, certain managed services might not fit within your budget, but building custom automations with Lambda and EventBridge can fulfill a similar purpose. Use AWS SSO (IAM Identity Center) over individual IAM users.

Blue Team

MatheuZSecurity/ksentinel

By MatheuZ: A Linux kernel module that monitors syscall table integrity and critical kernel functions to detect rootkit modifications like ftrace hooks, kprobes, and syscall table hijacking.

Coruna: The Mysterious Journey of a Powerful iOS Exploit Kit

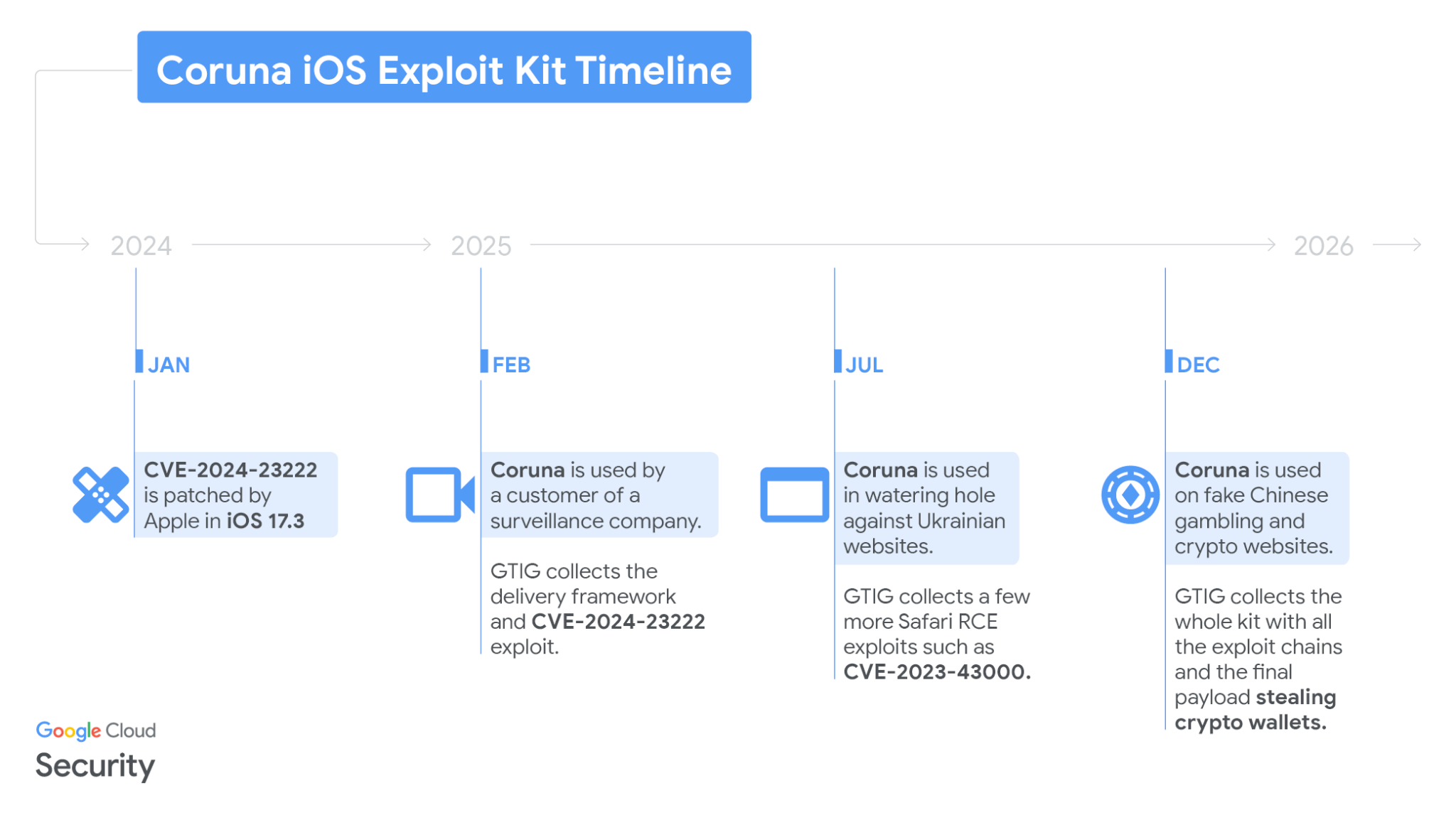

Google Threat Intelligence Group (GTIG) discovered the "Coruna" iOS exploit kit containing five full exploit chains and 23 exploits targeting iOS 13.0 through 17.2.1, initially used by a surveillance vendor customer, then by Russian espionage group UNC6353 in watering hole attacks against Ukrainian users, and finally by Chinese financially-motivated actor UNC6691 in broad campaigns.

Additional technical analysis and IOCs by iVerify in their write-up here.

💡 Word on the street is that these exploits may be from the Trenchant guy who sold the iOS exploit chain to a Russian exploit broker, which then proliferated to these other groups.

Detection Pipeline Maturity Model

Scott Plastine presents a five-level maturity model for detection pipelines, progressing from analysts manually checking security consoles (None) to a risk-based correlation engine that aggregates both commercial security tools and custom telemetry analytics (Standard+). The model highlights the differences between closed-source security tool analytics (endpoint tools like CrowdStrike, cloud tools like AWS GuardDuty) and custom analytics built on raw telemetry (Windows Event Logs, AWS CloudTrail), and recommends routing all detections through a risk engine that scores and correlates events across assets and users before alerting.

Advanced maturity adds atomic high-fidelity detections and risk-based custom rules, while Leading maturity incorporates data science-backed outlier detection (using platforms like Databricks or Dataiku) and deception techniques like honeytokens. Scott recommends reducing reliance on unmeasurable closed-source analytics by lowering their risk scores in the correlation engine while building validated custom detections that adversaries can't test against.
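The risk-engine idea can be sketched in a few lines: every detection adds risk to the asset or user it fired on, vendor analytics are down-weighted relative to validated custom detections, and an alert fires only when an entity's total crosses a threshold. The weights, threshold, and detection names below are hypothetical values picked for illustration, not numbers from Scott's post.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    entity: str     # asset or user the detection fired on
    source: str     # "vendor" (closed-source tool) or "custom" (own analytics)
    name: str
    base_risk: int


# Hypothetical tuning: trust validated custom detections more than opaque vendor ones.
SOURCE_WEIGHT = {"vendor": 0.5, "custom": 1.0}
ALERT_THRESHOLD = 100


def correlate(detections: list[Detection]) -> dict[str, float]:
    """Sum weighted risk per entity and return only entities at or over the threshold."""
    scores: dict[str, float] = defaultdict(float)
    for d in detections:
        scores[d.entity] += d.base_risk * SOURCE_WEIGHT[d.source]
    return {entity: score for entity, score in scores.items() if score >= ALERT_THRESHOLD}


detections = [
    Detection("host-1", "vendor", "edr-suspicious-process", 60),
    Detection("host-1", "custom", "rare-parent-child-process", 40),
    Detection("host-1", "custom", "honeytoken-accessed", 50),
    Detection("host-2", "vendor", "cloud-unusual-api-call", 80),
]

alerts = correlate(detections)
print(alerts)
```

Here `host-1` alerts because three weaker signals add up (30 + 40 + 50), while the single vendor detection on `host-2` stays below the threshold on its own.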

Red Team

0xbbuddha/notion

A Mythic C2 profile that uses Notion as a covert communication channel. Agents communicate by reading/writing pages in a shared Notion database, making C2 traffic indistinguishable from normal SaaS usage — a Living off Trusted Sites (LoTS) technique.

vulhunt-re/vulhunt

BINARLY released VulHunt Community Edition, an open-source vulnerability hunting framework for analyzing software binaries and UEFI firmware, built on their Binary Analysis and Inspection System (BIAS).

See also their community-contributed rules repo (currently only 3 rules) and Skills repo, which contains skills for decompiling functions, finding functions or call sites, performing data flow analysis, searching code/byte patterns, etc. powered by VulHunt MCP tools.

💡 Also congrats to my friend Gwen Castro who recently became CEO of BINARLY 🥳

AI + Security

Quicklinks

RankClaw - Web app to scan AI skills for security risks before you install them.

Securing AI Agents in the Enterprise: 5 Use Cases - AI agents are now in enterprise environments; running workflows, accessing data, and interacting with other services through roles, tokens, and service accounts. This guide breaks down 5 use cases teams must solve to safely deploy agents across cloud and SaaS environments.*

The looming AI clownpocalypse - “What happens when we reach the tipping point where exploits become cheaper to autonomously develop than they yield on average?”

*Sponsored

Cybersecurity’s Need for Speed & Where To Find It

Phil Venables applies Stewart Brand's Pace Layers framework to cybersecurity, arguing that organizations must accelerate their security OODA loops to outpace AI-enabled attackers.

The post covers 11 areas we can speed up, including:

Streamline software delivery pipelines - fix faster with coding agents, Skill packs for threat modeling, security analysis, invariant maintenance.

Implement autonomic (not just automated) security operations.

Systematize threat intelligence into macro (strategic TTPs) and micro (IOCs/signatures) feeds.

"Shift down" security controls into lower platform layers for security-by-default.

Improve control reliability engineering to catch silent failures.

Use deception/moving target defenses to slow attackers while defenders speed up their response cycles.

AI is Eating Security: What does security look like in five years?

Great talk by Alex Stamos at Reddit’s SnooSec last week. I liked his slide on how AI has already impacted security teams across the SOC, security engineering, AppSec, DFIR, threat intel, etc. His high-confidence predictions:

Smaller, narrower teams - Fewer, more senior people on top of AI agents.

Building on Legos - Companies will build on vendor-provided specialized components.

VulnOps - The speed of vuln discovery and exploitation means every company needs to worry about 0-days.

Humans will be supervising machine <> machine conflict.

"Five to ten years ago, only ~25 of the Fortune 500 had to seriously worry about 0-day. In 6-9 months it'll be everyone."

Codex Security: now in research preview

OpenAI announces Codex Security (the artist formerly known as Aardvark). “We’ve reduced the rate of findings with over-reported severity by more than 90%, and false positive rates on detections have fallen by more than 50% across all repositories.” Codex Security is rolling out in research preview to ChatGPT Pro, Enterprise, Business, and Edu customers via Codex web with free usage for the next month.

Codex Security works by first generating a threat model, then searching for vulnerabilities, testing the findings dynamically in sandboxed validation environments where possible, and finally attempting to patch issues in a way that aligns with system intent and surrounding behavior. “Over the last 30 days, Codex Security scanned more than 1.2 million commits across external repositories in our beta cohort, identifying 792 critical findings and 10,561 high-severity findings.” Codex Security found critical vulnerabilities in OpenSSH, GnuTLS, PHP, libssh, Chromium, and more.

💡This is cool work by a smart team. I’m going to point out a few things that aren’t clear from the stats shared though: “50% fewer false positives” - does that mean it went from tens of thousands to thousands of FPs? In other words, 50% fewer could still be a bad N. Also how are they calculating FPs and how do they know the FPs went down that percent, have humans triaged all the findings so there’s ground truth?

Regarding the 792 critical and 10K high-severity findings, that’s like reporting the number of findings directly out of your security scanner: yes, it sounds good, but how many of those are true vs false positives? What vulnerability classes? How many repos, and what was the tech stack breakdown of those repos? Are they actively maintained/popular?

Again, I’ve met the Codex Security team and they’re super sharp, I just think too often people read numbers like these and don’t think about them carefully, so I wanted to give examples of context that would be useful to know.

Partnering with Mozilla to improve Firefox’s security

Anthropic announces Claude Opus 4.6 autonomously found 22 vulnerabilities in Firefox over two weeks, 14 of which Mozilla assigned High severity. The team also tested (blog) if Claude could not only find vulnerabilities, but create exploits for them. They gave Claude a VM and a task verifier, and gave it 350 chances to succeed. Claude only successfully created working exploits in 2 of the attempts, costing $4,000 in API credits. Note also that the exploit only works within a testing environment that removes some of the security features of modern web browsers (e.g. it doesn’t escape the browser sandbox).

Takeaways: Claude is better at finding bugs than writing exploits (as of today). But frontier LLMs are able to write working exploits today for a pretty complicated bug and target (Firefox, a well-tested, modern browser).

Neat work by Anthropic’s Evyatar Ben Asher, Keane Lucas, Nicholas Carlini, Newton Cheng, and Daniel Freeman.

💡Pretty cool write-up of Claude’s process and methodology, worth a read. It’s great to see labs evaluating model capabilities across several axes (not just finding bugs, but writing exploits). This gives us defenders insight into the timelines and cost of the likely upcoming vulnpocalypse where the cost of finding bugs continues to decrease. I’ll also note once more the value of having a deterministic “verifier” in making agents much more effective.

Also: there’s a bunch we don’t know about this research: how much total was spent finding the bugs? How long did it take? What was the false positive rate? How much human triage time was spent? etc. etc.

[un]prompted

[un]prompted NotebookLM

The full transcripts and slides for every [un]prompted talk were uploaded to a NotebookLM so that you can query any of the source material. Awesome idea, love it. Great work by Rob T. Lee, Julie Michelle Morris, Emanuel Gawrieh, and Dragos Ruiu. Shout-out to Gadi Evron for sharing.

From Source to Sink: How to Improve LLM First-Party Vuln Discovery

Excellent [un]prompted talk by my Netflix friends Scott Behrens and Justice Cassel, in which they took a data-driven approach to a number of questions I’ve had for a while about using AI to find vulnerabilities, like: is it better to have a single superagent or multiple more focused agents? Should the agent find and then triage issues, or should there be a separate post-processing triage step?

They share the precision, recall, and cost (I want to see more people do this) of various approaches, an excellent architecture diagram on slide 23, and have released a railguard-skill GitHub repo with implementations of the various scanning approaches (including vulnerability finding skills!) and utilities for benchmarking.
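For anyone who wants to report the same three numbers for their own scanner, here is a minimal sketch of scoring a run against a labeled benchmark. The finding IDs, costs, and the two "approaches" are entirely hypothetical and are not results from the talk or code from the railguard-skill repo.

```python
def score(findings: set[str], ground_truth: set[str], cost_usd: float) -> dict[str, float]:
    """Precision, recall, and cost per true positive for one scanning approach."""
    true_positives = len(findings & ground_truth)
    precision = true_positives / len(findings) if findings else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    cost_per_tp = cost_usd / true_positives if true_positives else float("inf")
    return {"precision": precision, "recall": recall, "cost_per_tp": cost_per_tp}


# Hypothetical benchmark: four known vulnerabilities in the target repo.
ground_truth = {"sqli-1", "xss-2", "ssrf-3", "idor-4"}

# Hypothetical outputs from two approaches (the fp-* entries are false positives).
single_agent = score({"sqli-1", "xss-2", "fp-a", "fp-b"}, ground_truth, cost_usd=2.0)
multi_agent = score({"sqli-1", "xss-2", "ssrf-3", "fp-a"}, ground_truth, cost_usd=6.0)

print("single agent:", single_agent)
print("multi agent: ", multi_agent)
```

Putting cost next to precision and recall makes the trade-off visible: in this made-up example the second approach finds more real bugs with fewer false positives, but each true positive costs twice as much.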

💡 In my opinion this is a great example of research that contributes back to the security community: it tests a number of hypotheses (which architectures/approaches are most effective?) and puts hard data behind them AND shares the code and benchmarking scripts to reproduce it. More like this please 👏

How we made Trail of Bits AI-Native (so far)

This [un]prompted talk by Dan Guido is probably the best talk or resource I’ve seen on how to make your company AI-native. “AI isn't a feature you ‘adopt.’ It is a force that commoditizes effort and shortens the half-life of best practices… The core idea is a compounding operating system built from incentives, defaults, guardrails, and verification loops that let humans and autonomous agents ship high-rigor work at dramatically higher throughput. The talk covers the concrete artifacts that make this real: internal and external skills repositories, a curated marketplace for third-party skills, opinionated configuration baselines, and sandboxing patterns.”

GitHub link with bigger screenshots (AI Maturity Matrix), YouTube recording.

💡I especially like that Dan called out the resistance people have making this transition (Am I being replaced? What does it mean for my identity if AI can do parts of my job better than I can?) and how to support them.

The AI Maturity Matrix and measuring adoption slides as well as how to create an adoption engine via hackathons I thought were quite practical and actionable 👌

Misc

Misc

Dropout - Brennan Lee Mulligan thanks the entire Greek Pantheon

Privacy Activist Toolbox by Privacy Guides - A resource for anyone interested in becoming a better privacy rights activist, or anyone who wants to start advocating for privacy rights.

Chinese hackers likely compromised an FBI network used to manage wiretaps and intelligence surveillance warrants

An internal DHS document obtained by 404 Media shows for the first time Customs and Border Protection (CBP) used location data sourced from the online advertising industry to track phone locations. ICE has bought access to similar tools.

Meta’s new AR glasses are sending all sorts of sensitive videos to their human workforce in Kenya. “We see everything – from living rooms to naked bodies. Meta has that type of content in its databases. People can record themselves in the wrong way and not even know what they are recording…Clips that could trigger ‘enormous scandals’ if they were leaked.”

One annotator sums it up: “You think that if they knew about the extent of the data collection, no one would dare to use the glasses”.

Iranian Hacking Groups Go Dark During US, Israeli Military Strikes - “A popular Iranian prayer app, BadeSaba, was reportedly hijacked to tell its users that ‘help has arrived’ and then urged Iranian army members to surrender. In the early hours of fighting, pro-regime news agencies were compromised and Iranian television stations were repurposed to broadcast videos of President Donald Trump and Israel’s Benjamin Netanyahu.”

Across party lines and industry, the verdict is the same: CISA is in trouble - The agency lost a third of its people in a year. Now industry and lawmakers on both sides say it's unprepared for a potential crisis. “If we got into a major conflict, let’s say, with China, and they start triggering Volt Typhoon-related malware, are we organized and ready to roll? I don’t think so.” “We’re asking states to do a job they’re not resourced to do, while weakening the one federal agency designed to help them.”

AI

🎶 Suno - So Much Drama in the Frontier Labs - Epic rap

Techdirt’s Mike Masnick on the recent OpenAI/Anthropic/U.S. government situation, and on the subtlety of what specific words actually mean from a legal and precedent point of view.

Bitter Lesson Engineering - Daniel Miessler argues that instead of telling AI how to do things, you should tell it what outcome you want.

googleworkspace/cli - One command-line tool for Drive, Gmail, Calendar, Sheets, Docs, Chat, Admin, and more. Dynamically built from Google Discovery Service. Includes AI agent skills.

You need to rewrite your CLI for AI agents - Justin Poehnelt on his thoughts and lessons learned building the Google Workspace CLI. Follow-up post: The MCP Abstraction Tax.

You can now run OpenClaw on AWS via Lightsail

FT - Amazon holds engineering meeting following AI-related outages - “There had been a ‘trend of incidents’ in recent months, characterized by a ‘high blast radius’ and ‘Gen-AI assisted changes’ among other factors. Junior and mid-level engineers will now require more senior engineers to sign off any AI-assisted changes.”

Pawel Huryn on some additional backstory - In November 2025 Amazon mandated Kiro as their only AI coding tool and set an 80% weekly usage target.

✉️ Wrapping Up

Have questions, comments, or feedback? Just reply directly, I’d love to hear from you.

If you find this newsletter useful and know other people who would too, I'd really appreciate if you'd forward it to them 🙏

Thanks for reading!

Cheers,

Clint

P.S. Feel free to connect with me on LinkedIn 👋